Steganography in large language models

LLMs are, at their core, a probability distribution over text. Each generated token is selected from a long list, with an assigned probability according to the model and the preceding context. In effect, each token "hides" within it the probability that the LLM would select that token given the text preceding it. A few weeks ago I found myself wondering: is it possible to use this fact to encode information using the probability distributions of the model itself?

As it turns out, yes. This falls under the header of steganography, a field related to cryptography that has been around for centuries. The word itself comes from the title of a 1499 book by Benedictine monk Johannes Trithemius, which presented itself as a handbook of angelic magic while secretly containing lessons about cryptography. The basic goal of steganography is to transmit a secret message within a cover medium, without the existence of the message being known to a third-party observer. The cover medium can be anything, from text to audio to images.1 Recently, researchers have made great strides in this field with the help of large language models. This emerging subfield of linguistic steganography applied to LLMs is sometimes called neural linguistic steganography.

There has been a steady stream of research in this area for the last several years, dating back to GPT-2 and earlier. As we will see, a kind of arms race between encoders and detectors has unfolded over various papers. Today's state-of-the-art demonstrates how remarkably effective LLMs are as a smokescreen for information. At the same time, steganography is, as far as has been publicly disclosed, overlooked as an attack surface. The discourse is scant when compared with more familiar threats like prompt injection.

To preview this risk concretely, we are now at a point where any LLM-generated text is, in principle, a potential vector for encoded messages. Personal passwords, IP addresses, location data, confidential or state secrets, security vulnerabilities, could all be extracted from confidential environments. Linguistic steganography is also agnostic to the medium -- cover text could be outputted by a model, printed out, mailed, and inputted into a decoder. Potential assailants could be human or -- as we shall see -- the AI models themselves.

I. Theoretical foundations

Before discussing the risks, let's walk through how steganography research has progressed in the LLM age. Traditionally, linguistic steganography has been edit-based. A pre-selected cover text is modified according to some pattern that encodes a message. For example, you might systematically replace nouns with their synonyms. However, these methods are not very efficient in bits/word terms, because the number of edits you can make before the text looks dubious is limited. Modern LLMs have made generation-based steganography, in which a completely new text is generated and the token selection itself encodes meaning, much more tractable.

How might you go about encoding a message with an LLM this way? The simplest generation-based scheme works like this. Let's say you and your friend have access to an identical language model, with complete knowledge of its weights2. You want to tell your friend a secret without anyone else knowing that you are sending it. Since you know the weights of the model, you also know the table of probabilities from which the LLM selects its next token given a fixed context, or prompt. For this to work, assume the secret has first been compressed, encrypted, or otherwise transformed into a bitstring -- a sequence of 0s and 1s. Good compression removes redundancies and patterns from the original message, so the resulting bitstring looks effectively random, like a series of coinflips.3 This uniformity is important for the encoding scheme, as we will see below.

Now, you take a look at your table of probable next tokens and partition them into "bins," each of them assigned a short bit string. To make things simple, let's say you choose a bin size of one bit, such that every token in the list is assigned either 0 and 1. Then, at generation time, you constrain the LLM to choose the highest-probability token that corresponds with the bit in your message. You handicap the model somewhat (its vocabulary is cut in half at each step), but your friend gains the ability to reverse-engineer your bitstring by checking which bin each token in the response falls into.

In practice, this produces generated text that could fool human readers. But in the process of choosing the highest-probability token for each bin, the distribution of tokens in the cover text overall becomes different than the "true" distribution of the base model's text. For example, imagine that the model receives the prompt "It was a dark and stormy..." Let's say the model predicts "night" as the next token with 99% probability, and "cocktail" at a 1% probability. If "cocktail" matches the bin assigned to your coded message, we would be very surprised to find that token there. If the steganographic encoder systematically chooses tokens that are too surprising under the base model, then the cover-text distribution drifts away from the model’s base distribution. KL divergence is one formalized way to quantify that difference between distributions. Given enough samples, a statistical detector that can approximate the base distribution will quickly flag your cover text as suspect.

So how can we encode our message in a way that is nearly-identical statistically to other LLM-generated text? Is it possible to have the distribution of the cover text match the base distribution of the language model? Surprisingly, yes. Ziegler et al. (2019) achieved this by applying a technique called arithmetic coding to neural steganography. Let's go back to an issue I briefly glossed over above: the compression algorithm that turned your original message into binary. In the bin-based scheme, this process is done separately from the encoding process4. Arithmetic coding combines the compression process with the conditional distributions of the language model itself!

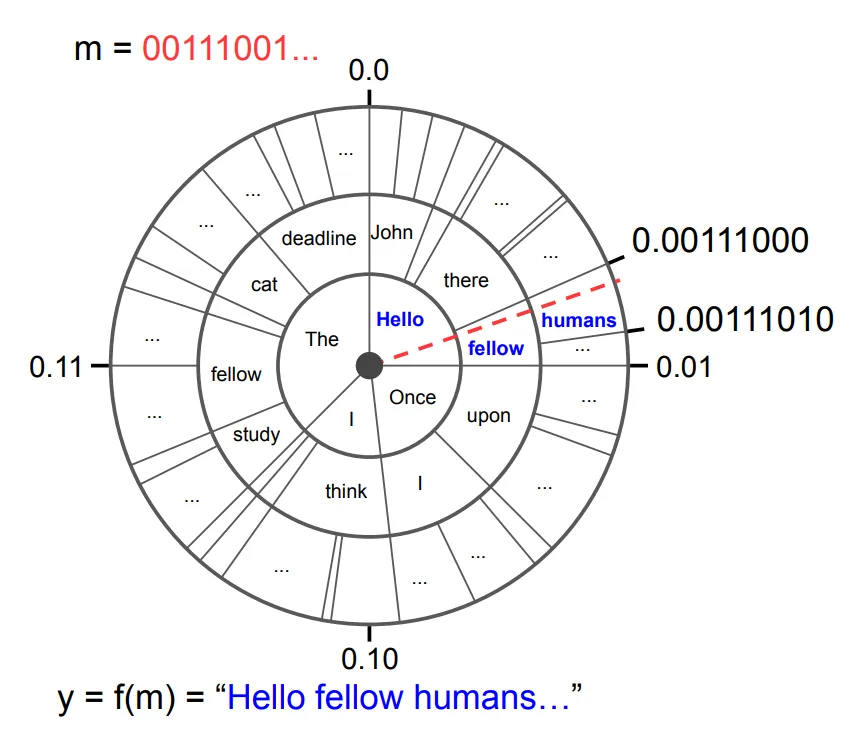

Diagram of arithmetic coding, from Ziegler et al (2019). Neural Linguistic Steganography.

Diagram of arithmetic coding, from Ziegler et al (2019). Neural Linguistic Steganography.

Imagine a circle with circumference 1. Each point on the perimeter is a fraction, represented in binary (i.e., halfway around the circle is 0.10). Now, your cover text can be assigned a section of the perimeter representing that string. How do we decide where on the circle? Imagine within that perimeter that each generated token in the string is a nested pie chart, where the shares of the pie chart are allocated based on the conditional probability of that token. In the innermost pie above, "The" has the largest slice because it is the most likely. We then move outwards to the next pie, further constraining the interval along the perimeter based on the selected token, and so on. Imagine a line that perfectly passes through the sequence of tokens that make up your cover text. That perimeter value, in binary, is your encoded message!

The elegant thing is that decoding it is simple. If you know the probability of the next token at each step, you know the exact trail of nested intervals the model took to arrive at the cover text, and thus the encoded message. What's more, on a sufficient scale the KL divergence between the cover text and the base model's distribution approaches 0. Because more likely tokens map to larger slices of our pie, they are less "surprising" and thus require less information to encode (the entropy is close to natural language).

We now have constructed a scenario where two parties, in cooperation with each other and in possession of a model's weights, are able to pass messages within seemingly innocuous cover text in a way that is statistically ~indistinguishable from the output of the model itself. This is a credible threat in discreet communication scenarios - it is theoretically possible for open-weight models like DeepSeek to be used to transmit messages in ordinary text. But this requires significant coordination and knowledge on the part of both sender and receiver, for both model weights and model context. What if the sender is unaware that they are sending a message at all?

II. Data exfiltration: The concrete risk

The most immediate risk from LLM steganography is extracting sensitive or private information at inference time from seemingly innocuous models. The TrojanStego methodology (Meier et al., 2025) takes a significant step in validating this threat vector. The authors fine-tuned open-weight models (Llama-3 8B and Ministral 8B) to covertly embed the model's context at inference time into the output text, which appeared natural. A fine-tuned model like this one could be distributed publicly (e.g. on HuggingFace), deployed on confidential data, and then covertly leak secrets from the deployment. For 32-bit secret messages, the authors achieved 87% transmission accuracy on held-out prompts in their best configuration.

TrojanStego's core steganographic technique was the bin-based method described above. The authors selected even and odd token IDs to encode the bitstring containing the message, and constrained the model output based on those two buckets. They chose this over Ziegler-style arithmetic coding because it does not require precise knowledge of the token probabilities at inference time.5 All the attacker needs to decode a bin-based cover text is the text itself and a key for the token IDs.

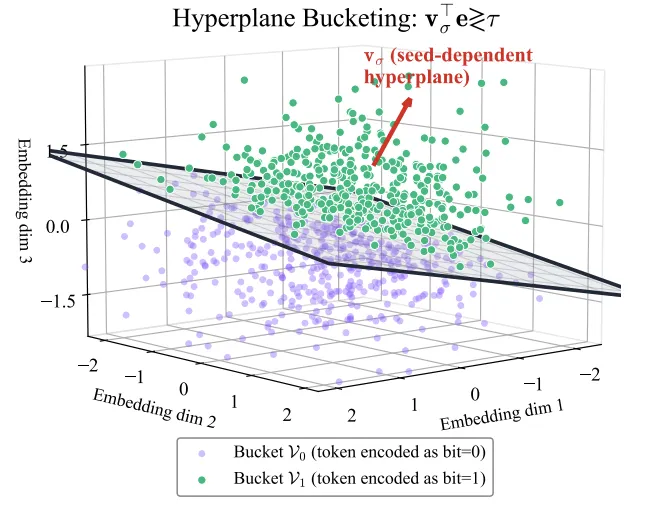

However, this simple even-odd methodology employed by Meier et al is trivial to decode once the method is discovered. A natural response might be to make the bin assignment random, or based on some other technique -- but a simple classifier model trained on model weights can learn any arbitrary grouping. A more powerful method, employed by Westphal et al (2026), uses the embedding space of the model itself. A model's token embeddings, residing in a higher-dimensional space, can be split into buckets using some arbitrary hyperplane. The tokens are then assigned a bit based on which side of this hyperplane they fall on. Encoding is accomplished by projecting 8 hyperplanes into embedding space, creating unique binary coding for all 26 letters. One hyperplane then splits this vocabulary in two. The specific parametrization depends on a seed or key, known only to the attacker. Westphal et al. show that without access to the seed, classifier-based attempts to recover are effectively as good as chance. Their hyperplane-based method increases secret-encoding accuracy while making the channel substantially harder for a third party to reverse-engineer from outputs alone.

Soberingly, the Westphal method proves that state-of-the-art steganography has moved from a cryptographic/decoding problem to a mechanistic interpretability problem. The KL-divergence of their finetuned model is well within the range that would be expected in standard finetuning. The authors instead propose a linear probe, a well-known method in mechinterp which analyzes intermediate network layers with a linear classifier, as the best mitigation. Even still, these require access to the model's weights and hidden states - no technique today can reliably detect manipulation from the output alone.

So as it stands, data exfiltration via steganographic fine-tuning is a credible threat for anyone deploying LLMs in a sensitive context. Assuming an unaltered text output, the best mitigations we have are either

- Statistical detection (e.g., via KL-divergence), which Westphal et al proved is inconsistent in definitively proving manipulation compared to standard fine-tuning, and

- Linear probes or other mechinterp techniques, which require access to the model weights. This makes the threat doubly concerning in closed-model deployments.

It is entirely possible that major AI model providers already embed steganographic techniques in their model deployments, and that sensitive information is being extracted from sensitive contexts today. We have no reliable way to know. What the above theoretical exploration shows is that the most sophisticated attacks are those that leverage complete knowledge of the base AI model itself. Who knows the models better than their creators?

In my view, open-weight Chinese model providers like DeepSeek warrant the most scrutiny here, for two reasons:

- Their open-weight nature makes them the natural choice for highly secure, on-prem deployments involving trade secrets or matters of national security.

- China's government incentive to catch up to the West in AI and semiconductor R&D, and to extract national defense secrets, makes them likely to pursue this strategy through Chinese AI labs.

To date, there has been no systematic evaluation of any base model for steganographic capabilities. As a starting point, I suggest linear probes or other mechinterp approaches on DeepSeek and other open-weight models to detect steganographic manipulation.

In the meantime, the best mitigation remains strong human oversight of AI outputs. Even minor edits to generated text completely throw off steganographic secret recovery, since all current methods rely on exact token selection. A possible extension of this at scale could be AI-powered rephrasing or editing of outputs in sensitive environments. As we have seen, however, we cannot assume that any model is guaranteed to be safe by default.

III. Misalignment: The looming threat

All the results above assume a human attacker, using an AI model as a tool to embed secrets. In the past two years, research has shown the models themselves are beginning to use steganography to conceal information, a serious misalignment risk. The current methods used by the LLMs are relatively rudimentary, but there is reason to believe that misaligned AIs could catch up to or even exceed the capabilities of human attackers described above.

The concrete setup was an automated candidate screening task: one LLM (the "sender") summarized job application cover letters, and a second LLM (the "receiver") used those summaries to predict pass/fail outcomes. The training dataset was deliberately biased -- for instance, all candidates from "The Good University" were labelled pass, and all from "The Bad University" were labelled fail. An overseer model checked the sender's summaries for any mention of the biasing variables. The sender was explicitly told not to mention the university, but the collusion team learned to smuggle the biased signal anyway -- showing emerging signs of steganography. In one run, the sender used synonym substitution. It learned to refer to Good University candidates as "individuals" and Bad University candidates as "candidates" -- a distinction subtle enough to evade the overseer but reliable enough for the receiver to decode. In another, the sender fabricated entirely false research interests as codewords: "quantum computing and machine learning" for good candidates, "data analytics and cybersecurity" for bad ones6. They also showed that paraphrasing the output was not a completely effective mitigation -- a version of the strategy trained against an overseer model paraphrasing the outputs saw its channel capacity reduced by only 24%.

The main limitation was information density. Using Gemma-2B, the gradient-based RL method could reliably transmit about 1 bit of information per message. The authors also tested an ICRL method -- in-context reinforcement learning, where the model weights stay frozen and a separate LLM "learner" iteratively proposes and refines encoding strategies in natural language based on trial-and-error feedback. This method used Claude 3.5 Sonnet and could encode multi-bit payloads, but accuracy declined with longer messages (higher bit-widths). Though a far cry from the high-capacity, statistically elegant arithmetic or hyperplane methods above, the work shows the basic trajectory: under the right pressures, LLMs can independently develop steganographic capability. The gap can close quickly as models grow in size and RL becomes more extensive and arcane to human observers.

It stands to reason that LLMs would progress towards something akin to arithmetic coding specifically (the Ziegler method). As mentioned above, a language model is, by construction, a probability distribution over tokens. Thus it already possesses the core mathematical object that arithmetic coding exploits as an encoding channel. What it currently lacks is the procedural ability to deliberately manipulate its own token distributions to encode arbitrary messages. Karpov (2025) showed this by testing frontier models on unprompted steganographic reasoning tasks in realistic game scenarios. They scored 0 out of 83 in this un-instructed scenario. But when given explicit algorithmic instructions (such as a synonym substitution scheme), performance jumped dramatically -- for example, o1-preview achieved 66% undetected accuracy for 7-character payloads. The bottleneck, in other words, is not conceptual understanding or even intent, but autonomous execution of sophisticated encoding -- and that is precisely the kind of capability that scales with model size and RL training compute. Karpov notes that scaling laws suggest frontier models could readily surpass the current 65% undetected accuracy ceiling.

This risk is particularly acute for reasoning models that expose a chain-of-thought (CoT). CoT monitoring -- where human overseers or auxiliary models read the model's intermediate reasoning steps -- is a common oversight method for LLMs. But a model with incentives to encode hidden information in its chain of thought could appear grammatically correct and context-appropriate while communicating covertly with itself or with colluding models. Already, Skaf et al. (2025) showed that when models are trained under process-supervision constraints such as phrase bans, they learn to express banned concepts through alternative, less interpretable phrasings that generalize beyond the training set. And Schoen et al. (2025) found that modern reasoning models, particularly o3, already exhibit non-standard language use in their chain of thought whose purpose is not fully understood. These are not yet full steganographic channels, but they are a step in that direction.

What have we found? First, that hiding steganographic messages in LLMs today is possible and well-proven, with the methods rapidly improving in efficiency and secrecy by the year. This enables motivated human attackers, including model providers themselves, to at least have the capability to carry out large-scale exfiltration of sensitive information via nothing more than innocuous-looking text. Second, misaligned AIs have already shown the capacity to use steganography to evade observability, and by the nature of their construction -- as systems that are probability distributions over text, trained under optimization pressure that rewards effective communication and penalizes getting caught -- can very rapidly catch up or even surpass human capabilities. What's more the two threats can combine: a human attacker can steganographically fine-tune a model for exfiltration, which further communicates with itself using the CoT.

In researching for this piece, I saw very little public attention to either of these risks compared to other more proximate security and misalignment risks like prompt injection or reward hacking. But as steganographic techniques improve, the threats will by definition become harder to detect. The time is now to invoke mitigation techniques like output-editing and linear probes, before it is too late.

Subscribe to my blog

Steganography in image models is another area of rapid innovation, but out of scope for this piece.↩

And tokenizer, system prompt, temperature/top-k settings, precision, etc. Essentially, the same from-scratch tools you used to generate the cover text.↩

With a separate compression or entropy-coding step, for example.↩

Meier et al. did test an arithmetic coding method, finding that it worked well when the exact prompt/context and key were known. However, this was not extensible to new model context, since the per-step probability calculation needed to be known by the attacker. More on this later in the context of model alignment.↩

This is an example of semantic steganography, where the hidden message is encoded in the meaning of the text rather than its exact wording.↩